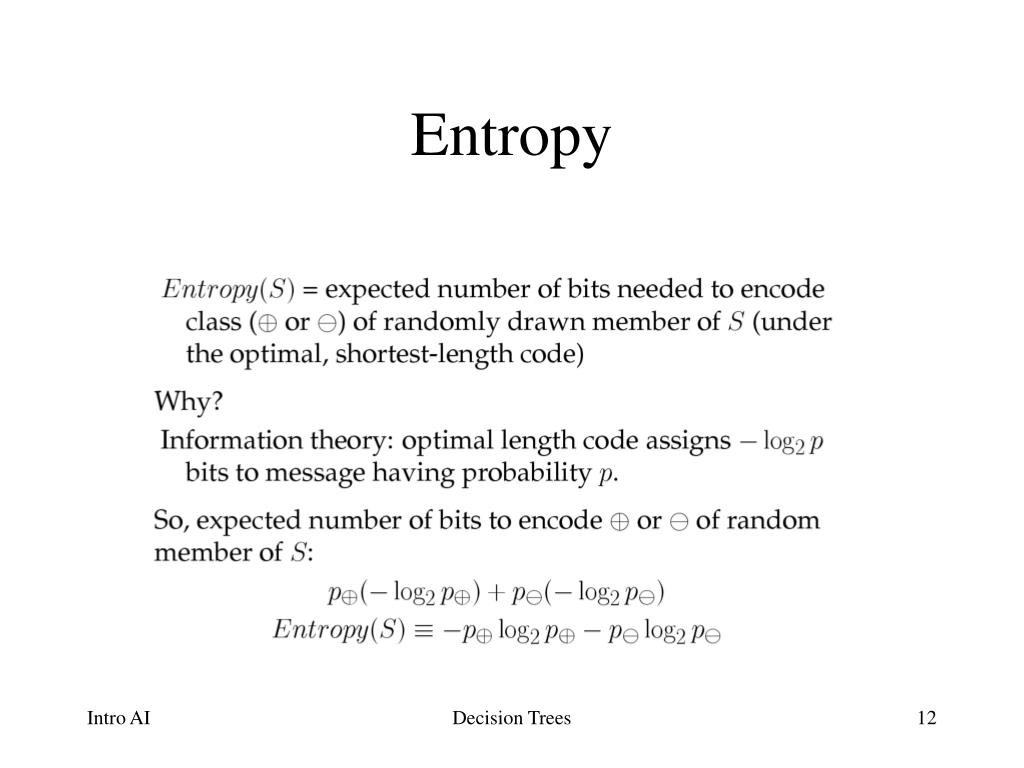

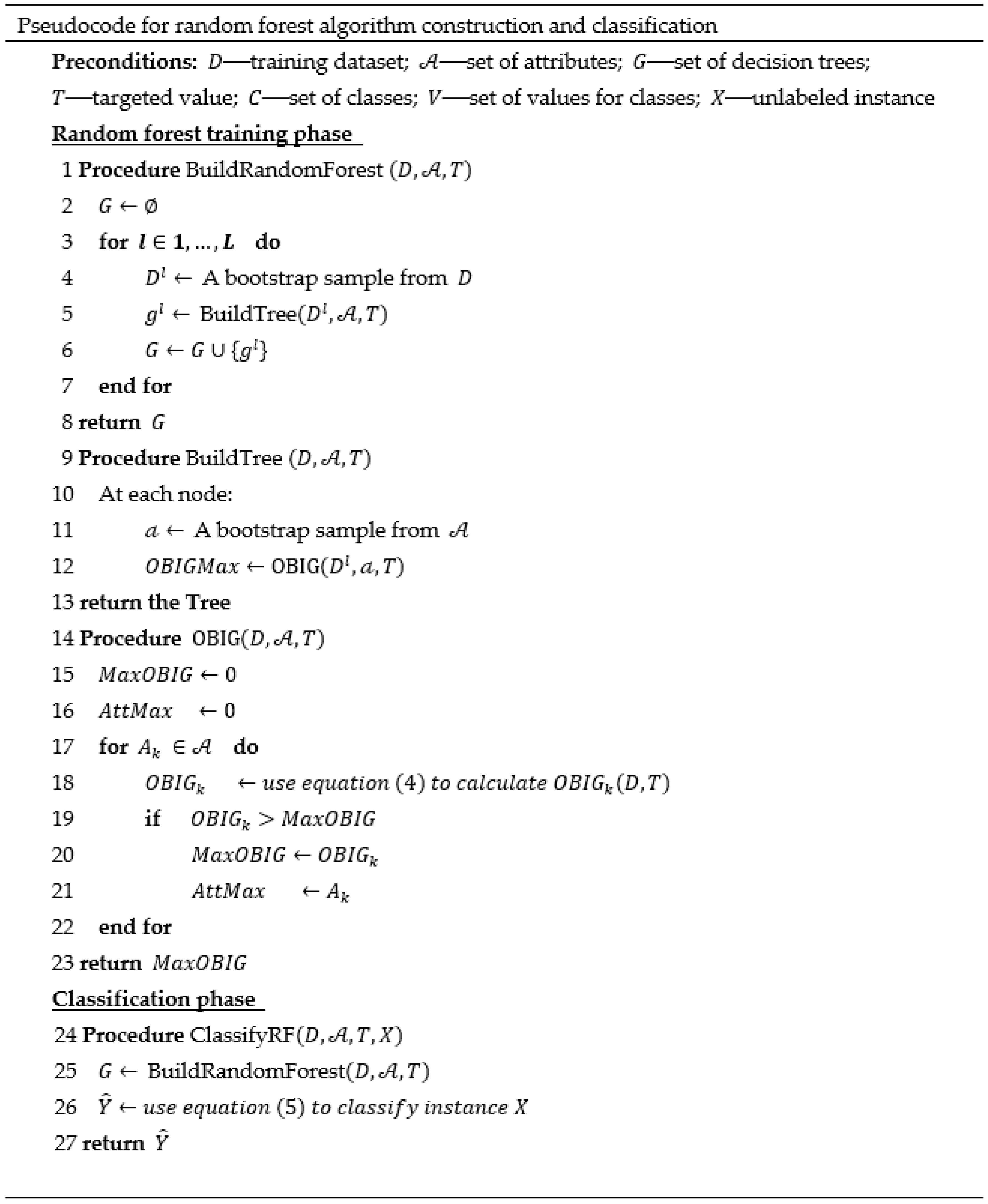

Entropy and information gain are key concepts in information theory, data science, and machine learning. Entropy is a measure of uncertainty or unpredictability, whereas information gain is the amount of knowledge acquired from a particular decision or action. A solid understanding of both helps in handling difficult problems and making sound decisions across a range of disciplines. In data science, for instance, entropy can be used to assess how varied or unpredictable a dataset is, while information gain helps identify the features that would be most useful to include in a model. In this article, we'll examine the main distinctions between entropy and information gain and how they affect machine learning.

The term "entropy" comes from thermodynamics, where it describes how chaotic or unpredictable a system is. In machine learning, entropy is a measure of a dataset's impurity. In essence, it quantifies the degree of uncertainty in a given dataset. For a dataset $S$, entropy is computed with the following formula:

$$\text{Entropy}(S) = -\sum_{i} p_i \log_2 p_i$$

where $p_i$ is the proportion of examples in $S$ that belong to class $i$.

Information gain measures the reduction in entropy obtained by splitting a dataset on a feature. For a binary feature $X$, it is computed as:

$$\text{Information Gain}(S, X) = \text{Entropy}(S) - \frac{|S_1|}{|S|}\,\text{Entropy}(S_1) - \frac{|S_2|}{|S|}\,\text{Entropy}(S_2)$$

where $S_1$ is the subset of data in which feature $X$ takes the value 0, and $S_2$ is the subset in which feature $X$ takes the value 1. The entropies of these two subsets, $\text{Entropy}(S_1)$ and $\text{Entropy}(S_2)$, are computed with the entropy formula above. The resulting information gain shows how much splitting the dataset on feature $X$ reduces its uncertainty.

## Key Differences between Entropy and Information Gain

| Entropy | Information Gain |
| --- | --- |
| Measures the disorder or impurity of a set of instances. It gives the average amount of information needed to classify a sample drawn from the set. | Measures the reduction in entropy achieved by splitting a set of instances on a feature. It gauges how much a feature tells us about the class of an example. |
| Calculated for a set of examples from the probability of each class in the set. | Calculated for each feature by splitting the set on that feature and computing the entropies of the resulting subsets. The information gain is the entropy of the original set minus the weighted sum of the subsets' entropies. |
| Quantifies the disorder or impurity in a collection of instances. The ideal split minimizes it. | The feature with the maximum information gain is chosen, which maximizes the feature's usefulness for classification. |
| Typically used by decision trees when evaluating the best split. | Frequently used by decision trees as the criterion for choosing the best feature to split on. |
| Usually favors splits that result in balanced subgroups. | |
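As a minimal sketch of the two quantities discussed in this article, the Python below computes entropy from class proportions, and information gain as the entropy of the full set minus the size-weighted entropies of the subsets produced by a feature. The function names `entropy` and `information_gain` and the toy data are illustrative assumptions, not taken from any particular library.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy (in bits) of a sequence of class labels."""
    total = len(labels)
    if total == 0:
        return 0.0
    counts = Counter(labels)
    # Sum -p * log2(p) over the proportion p of each class present.
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


def information_gain(labels, feature_values):
    """Entropy of the full set minus the weighted entropy of the subsets
    obtained by grouping the labels by feature value."""
    total = len(labels)
    subsets = {}
    for value, label in zip(feature_values, labels):
        subsets.setdefault(value, []).append(label)
    # Weight each subset's entropy by its share of the full set.
    weighted = sum(len(s) / total * entropy(s) for s in subsets.values())
    return entropy(labels) - weighted


# Hypothetical toy data: two candidate binary features and a binary class.
labels = ["yes", "yes", "no", "no"]
x_perfect = [1, 1, 0, 0]  # separates the classes completely
x_useless = [1, 0, 1, 0]  # leaves both subsets evenly mixed

print(entropy(labels))
print(information_gain(labels, x_perfect))
print(information_gain(labels, x_useless))
```

On this toy data, the feature that separates the classes completely has an information gain of 1 bit, while the feature that leaves each subset evenly mixed has a gain of 0, so a decision tree using this criterion would split on the first feature.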
